Much of the conversation about enterprise AI adoption centers on model performance and productivity gains, but that framing overlooks a starker reality: AI is spreading inside organizations faster than governance programs can keep up.

A recent AI telemetry study looked at anonymized usage patterns across enterprise environments to understand how AI tools are actually introduced and used at work. The research found that rather than entering through formal procurement or IT deployment, most AI tools observed were introduced through employee-led signups and integrations. When employees adopt these tools on their own, what begins as experimentation quickly becomes embedded in daily workflows, often before security teams are aware that the tools even exist in their environments.

This pattern should feel familiar to many tech leaders because it mirrors the early rise of SaaS, when employees adopted cloud applications to solve immediate problems while centralized governance lagged behind. AI adoption is following a similar path, but with far greater speed and deeper integration into operational workflows.

Traditional enterprise software deployment follows a predictable path: tools are evaluated, approved, procured, and deployed through centralized processes. Security and compliance teams assess risk before rollout, and governance controls are defined in advance. But AI adoption rarely follows this model.

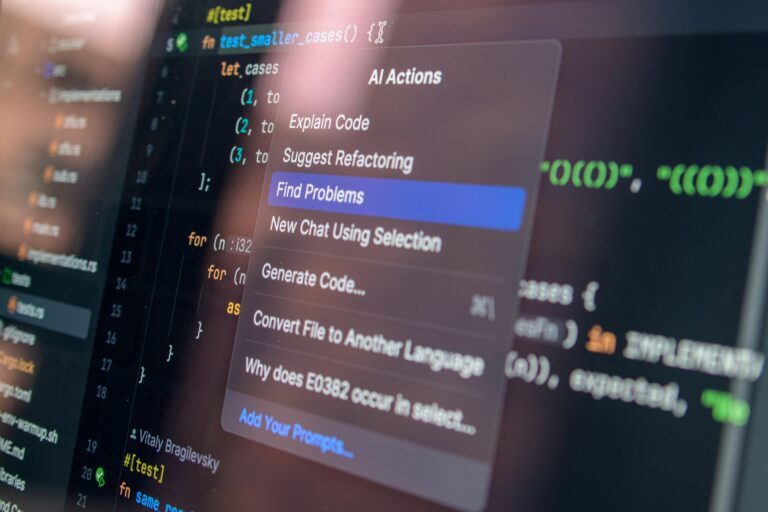

With AI, employees connect meeting intelligence tools to their calendars. Developers install coding assistants to accelerate troubleshooting. Marketing teams experiment with presentation generators. Customer support staff explore AI assistants to summarize cases and draft responses. These decisions are rarely coordinated. They are driven by a desire for immediate productivity gains and by how easily AI tools can be enabled through browser extensions, SaaS integrations, or a quick account signup.

Because these tools can be activated in minutes and integrated directly into existing workflows, they bypass the traditional network-based checkpoints that governed earlier generations of enterprise software.

Reshaping the Enterprise Risk Model

The speed of AI adoption shows how technology now enters the workplace. Employees are no longer passive recipients of IT deployments; they are active participants in selecting and integrating tools that improve their work. But this bottom-up adoption model creates new forms of risk that we need to consider.

AI tools often request access to calendars, documents, repositories, and collaboration platforms. These integrations create trusted connections between systems, and when employees authorize them, they may be extending access to sensitive data and operational workflows without fully understanding the scope of permissions involved. Over time, these connections accumulate, and what begins as a single experiment can evolve into an ecosystem of integrations and automations that operate beyond traditional visibility controls. The risk doesn’t stop with the tool itself; it extends to the access relationships created when AI systems are connected to enterprise data and workflows.

Many AI governance initiatives focus on approved tools and acceptable use policies, which are necessary, but they assume that AI usage begins with sanctioned deployment. In practice, adoption often begins outside formal approval processes. Browser extensions, personal accounts, and SaaS integrations can introduce AI capabilities without triggering procurement workflows. By the time a tool appears on an approved vendor list, it may already be embedded in multiple workflows.

Governance frameworks designed around centralized approval struggle to manage technology that enters through decentralized adoption. The challenge is not simply controlling which tools are used. It is understanding how they are connected, what data they can access, and how they are integrated into operational processes.

As AI tools become embedded in daily work, they evolve from standalone applications into workflow infrastructure. They summarize meetings, draft documents, retrieve information, trigger automations, and assist with decision-making. Consider a support team connecting an AI assistant to its ticketing system to accelerate response times. Within hours, resolution speed improves, and customer satisfaction rises. At the same time, the integration may grant the assistant access to historical case data, internal knowledge bases, and customer records. Without visibility into that connection, leaders might see gains in efficiency but also unknowingly expand data access pathways. This dynamic illustrates why AI adoption is not just a technology decision. It is an operational decision with implications for resilience, data governance, and business continuity.

Regaining Visibility

Attempting to block AI adoption outright is neither realistic nor productive. Employees adopt these tools because they provide immediate value, and organizations that restrict access entirely risk driving usage further underground. A more effective approach focuses on guardrails and informed adoption.

Security and IT teams should start by identifying where AI tools are connected to enterprise systems and what permissions they hold. Monitoring third-party integrations and access grants provides insight into how AI is entering workflows. Governance should prioritize access relationships rather than simply tool approval because understanding what data an AI tool can retrieve or modify is more important than whether it appears on an approved list. Guardrails can guide safer adoption without slowing innovation. Clear permission guidance, scoped access controls, and periodic integration reviews help reduce risk while preserving productivity gains.
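As a rough illustration of prioritizing access relationships over tool approval, the sketch below groups integration grants by app and ranks the apps that hold sensitive permissions. Everything in it is an assumption made for illustration: the `Grant` record, the scope names, and the sample data are hypothetical, and a real inventory would come from whatever export an identity provider or SaaS admin console offers.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical scope names; real platforms define their own.
SENSITIVE_SCOPES = {
    "calendar.read", "documents.read", "documents.write",
    "repo.read", "repo.write",
}


@dataclass(frozen=True)
class Grant:
    """One user's authorization of one third-party app (illustrative shape)."""
    app: str
    user: str
    scopes: frozenset


def summarize_access(grants):
    """Group grants by app: who authorized it and which sensitive scopes it holds."""
    by_app = defaultdict(lambda: {"users": set(), "sensitive_scopes": set()})
    for grant in grants:
        entry = by_app[grant.app]
        entry["users"].add(grant.user)
        entry["sensitive_scopes"] |= grant.scopes & SENSITIVE_SCOPES
    return dict(by_app)


def review_queue(grants):
    """Return apps holding any sensitive scope, widest access first."""
    flagged = [
        (app, info)
        for app, info in summarize_access(grants).items()
        if info["sensitive_scopes"]
    ]
    # Sort by number of sensitive scopes, then number of users, then name.
    flagged.sort(
        key=lambda item: (
            -len(item[1]["sensitive_scopes"]),
            -len(item[1]["users"]),
            item[0],
        )
    )
    return [app for app, _ in flagged]


# Made-up sample data, not drawn from any study.
SAMPLE_GRANTS = [
    Grant("meeting-notes-ai", "alice", frozenset({"calendar.read", "profile.read"})),
    Grant("meeting-notes-ai", "bob", frozenset({"calendar.read"})),
    Grant("code-helper", "carol", frozenset({"repo.read", "repo.write"})),
    Grant("slide-maker", "dave", frozenset({"profile.read"})),
]

if __name__ == "__main__":
    summary = summarize_access(SAMPLE_GRANTS)
    for app in review_queue(SAMPLE_GRANTS):
        info = summary[app]
        print(app, sorted(info["users"]), sorted(info["sensitive_scopes"]))
```

The ranking keys on what an app can reach rather than on whether it appears on an approved list, so an unapproved tool with only profile access falls out of the queue while a tool with repository write access rises to the top.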

Equally important is equipping employees with context. Employees often do not realize that authorizing an integration carries risk. When users understand the implications of granting access to calendars, repositories, or document systems, they make more informed decisions.

The trends we are seeing with AI adoption require today’s tech leaders to move beyond tool approval toward visibility into integrations and permissions. Governance must align with how technology is actually adopted rather than how organizations expect it to be deployed. The organizations that thrive will not be those that slow adoption, but those that understand how AI enters workflows and guide its use with clarity and intention.